Artificial Intelligence (AI) is transforming everything—from how we work and shop to how doctors diagnose diseases and governments make decisions. But as AI systems grow more powerful, they also become juicier targets for cyberattacks.

Imagine a hacker tampering with an AI that approves loans, controls smart factories, or even guides medical treatments. The consequences could be catastrophic.

That’s where Zero Trust security comes in—not just as a buzzword, but as a critical shield for the AI era.

Let’s explore how Zero Trust principles are applied to protect AI systems, why they are essential, and what that means for businesses and users alike.

🔍 First: What Is Zero Trust Again?

Zero Trust is a security model built on one core idea:

“Never trust, always verify.”

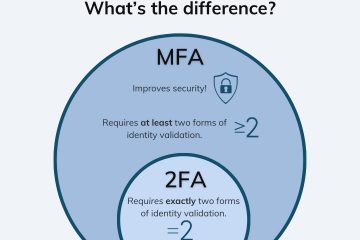

Instead of assuming everything inside a network is safe, Zero Trust treats every user, device, and request as potentially risky—no matter where it comes from. Access is granted only after strict verification, and only to the minimum needed.

Now, apply that mindset to AI—and you get a powerful defense strategy.

🤖 Why AI Needs Extra Protection

AI systems are uniquely vulnerable because they rely on three key things—all of which can be attacked:

- Data – AI learns from massive datasets. If that data is poisoned or altered, the AI makes bad decisions.

- Models – The AI “brain” itself (the trained model) can be stolen, copied, or tricked.

- Access & Usage – Who can run the AI? What inputs can they send? What outputs do they see?

Without strong safeguards, attackers can:

- Poison training data to bias results

- Steal proprietary AI models (a form of intellectual property theft)

- Trick AI with “adversarial inputs” (e.g., slightly altered images that fool facial recognition)

- Abuse AI APIs to generate spam, deepfakes, or misinformation

This is where Zero Trust steps in.

🛡️ How Zero Trust Secures AI Systems

Here’s how Zero Trust principles are applied across the AI lifecycle:

1. Secure the Data Pipeline

- Verify every data source: Only trusted, authenticated systems can feed data into AI training.

- Encrypt data at rest and in transit: So even if intercepted, it’s unreadable.

- Monitor for anomalies: Sudden changes in data patterns could signal poisoning attempts.

Zero Trust says: “Not all data is trustworthy—even if it comes from ‘inside’ the company.”
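To make "verify every data source" concrete, here is a minimal sketch in Python. It assumes each trusted source signs its data batches with a shared secret using HMAC-SHA256. The source names, the secrets, and the `sign_batch` / `verify_batch` helpers are all hypothetical, and a real pipeline would keep secrets in a secrets manager rather than in code.

```python
import hashlib
import hmac

# Hypothetical registry of trusted data sources and their shared secrets.
# Illustration only: real secrets belong in a secrets manager, not in source code.
TRUSTED_SOURCES = {
    "crm-export": b"example-secret-1",
    "sensor-gateway": b"example-secret-2",
}


def sign_batch(source_id: str, payload: bytes) -> str:
    """Compute the HMAC-SHA256 tag a trusted source would attach to a batch."""
    return hmac.new(TRUSTED_SOURCES[source_id], payload, hashlib.sha256).hexdigest()


def verify_batch(source_id: str, payload: bytes, signature: str) -> bool:
    """Accept a batch only if the source is known and the tag matches the payload."""
    secret = TRUSTED_SOURCES.get(source_id)
    if secret is None:
        return False  # unknown source: reject, even if it sits "inside" the company
    expected = hmac.new(secret, payload, hashlib.sha256).hexdigest()
    # compare_digest avoids leaking information through comparison timing
    return hmac.compare_digest(expected, signature)
```

A batch that was altered after signing, or that comes from a source missing from the registry, fails the check and never reaches training.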

2. Protect the AI Model Itself

- Store models in secure, access-controlled environments (like encrypted model registries).

- Use identity-based access: Only authorized developers or apps can deploy or update models.

- Log every interaction with the model—who ran it, when, and with what input.

Think of your AI model like a vault. Zero Trust ensures only verified people get a key—and their actions are recorded.
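The vault idea can be sketched in a few lines of Python. This example assumes the model registry stores a SHA-256 fingerprint for each approved model file, and that every interaction is written as one JSON audit line. The function names and the record fields are hypothetical, and storing a hash of the input instead of the raw input is a choice made here for illustration.

```python
import hashlib
import json
import time
from typing import Optional


def fingerprint(model_bytes: bytes) -> str:
    """Return the SHA-256 hex digest of a model artifact."""
    return hashlib.sha256(model_bytes).hexdigest()


def load_model_checked(model_bytes: bytes, expected_sha256: str) -> bytes:
    """Refuse to load an artifact whose hash differs from the registered one."""
    if fingerprint(model_bytes) != expected_sha256:
        raise ValueError("model artifact does not match registered fingerprint")
    return model_bytes


def audit_record(user: str, action: str, input_bytes: bytes,
                 now: Optional[float] = None) -> str:
    """Build one JSON audit line: who, what, when, and a hash of the input."""
    record = {
        "ts": time.time() if now is None else now,
        "user": user,
        "action": action,
        # Hash instead of raw input so the log itself does not leak sensitive data.
        "input_sha256": hashlib.sha256(input_bytes).hexdigest(),
    }
    return json.dumps(record, sort_keys=True)
```

If someone swaps the model file for a tampered copy, the fingerprint no longer matches and the load fails loudly instead of silently serving the wrong model.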

3. Control AI Access with Least Privilege

- A customer service chatbot shouldn’t have access to payroll data.

- An external partner using your AI API should only get limited, audited access.

- Apply granular permissions: Can this user run the model? View results? Retrain it?

Zero Trust enforces: “You get only what you need—nothing more.”
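Here is one way the "run, view, retrain" questions could look in code. This is a sketch under assumptions: the role names and the permission table are invented for illustration, and a real system would load them from an identity provider or policy engine.

```python
from enum import Flag, auto


class Permission(Flag):
    RUN = auto()
    VIEW_RESULTS = auto()
    RETRAIN = auto()


# Hypothetical role table: each role gets only what it needs.
ROLE_PERMISSIONS = {
    "support_chatbot": Permission.RUN,
    "external_partner": Permission.RUN | Permission.VIEW_RESULTS,
    "ml_engineer": Permission.RUN | Permission.VIEW_RESULTS | Permission.RETRAIN,
}


def is_allowed(role: str, needed: Permission) -> bool:
    """Deny by default: a role missing from the table has no permissions."""
    granted = ROLE_PERMISSIONS.get(role, Permission(0))
    # Every requested permission bit must be present in the granted set.
    return (granted & needed) == needed
```

The important design choice is the default: a role that is missing from the table gets nothing, rather than falling back to some "standard" level of access.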

4. Verify Every AI Request in Real Time

- Before processing an input (like an image or text prompt), verify:

  - Who is making the request?

  - Is their device secure?

  - Is this behavior normal? (e.g., 10,000 requests per minute = red flag)

- Block suspicious queries before they reach the AI.

This makes it much harder for attackers to probe the AI repeatedly in order to reverse-engineer it or find weaknesses to exploit.
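The three checks above can be sketched as a small gate that sits in front of the model. This Python example assumes identity and device checks happen upstream and arrive as simple flags. The `Request` fields, the `RequestGate` class, and the default limit of 600 requests per minute are hypothetical values chosen for illustration.

```python
from collections import defaultdict, deque
from dataclasses import dataclass


@dataclass
class Request:
    caller_id: str
    authenticated: bool     # identity verified upstream (assumed)
    device_compliant: bool  # device posture check passed (assumed)
    timestamp: float        # seconds


class RequestGate:
    """Checks identity, device, and request rate before a query reaches the model."""

    def __init__(self, max_per_minute: int = 600):
        self.max_per_minute = max_per_minute
        self._history = defaultdict(deque)  # caller_id -> recent allowed timestamps

    def check(self, req: Request) -> str:
        if not req.authenticated:
            return "deny: unauthenticated"
        if not req.device_compliant:
            return "deny: device not compliant"
        window = self._history[req.caller_id]
        # Drop timestamps that fell out of the 60-second sliding window.
        while window and req.timestamp - window[0] >= 60:
            window.popleft()
        if len(window) >= self.max_per_minute:
            return "deny: rate limit exceeded"
        window.append(req.timestamp)  # only allowed requests count toward the limit
        return "allow"
```

Each caller gets its own sliding window, so one noisy client cannot use up the allowance of everyone else.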

5. Assume Breach—Monitor Continuously

- Use AI-powered security tools to watch for strange activity within the AI system itself.

- If an AI suddenly starts outputting biased or erratic results, trigger an alert.

- Automatically revoke access if a device is compromised.
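As a rough sketch of the last two points, the Python below flags a model whose recent output scores drift far from a known baseline, and revokes every session tied to a compromised device. The three-standard-deviation threshold, the `is_drifting` helper, and the `SessionStore` class are assumptions for illustration, not a standard method.

```python
from statistics import mean, pstdev


def is_drifting(baseline, recent, threshold=3.0):
    """Flag drift when the recent mean is more than `threshold` baseline
    standard deviations away from the baseline mean."""
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        return mean(recent) != mu  # baseline never varied: any shift is notable
    return abs(mean(recent) - mu) / sigma > threshold


class SessionStore:
    """Tracks active session tokens per device so they can be revoked together."""

    def __init__(self):
        self._active = {}  # device_id -> set of tokens

    def issue(self, device_id, token):
        self._active.setdefault(device_id, set()).add(token)

    def is_valid(self, device_id, token):
        return token in self._active.get(device_id, set())

    def revoke_device(self, device_id):
        """Remove every session for the device; return how many were revoked."""
        return len(self._active.pop(device_id, set()))
```

In practice an alert from `is_drifting` would go to a human or an incident pipeline, while `revoke_device` would be triggered by whatever system reports the device as compromised.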

🌐 Real-World Examples

- Healthcare AI: A hospital uses Zero Trust to ensure only verified doctors can access an AI that diagnoses tumors. Patient data never leaves the secure environment, and every diagnosis is logged.

- Financial Services: A bank applies Zero Trust to its fraud-detection AI. Even internal teams must authenticate and justify access. Training data is scanned for manipulation.

- Cloud AI Platforms: Cloud platforms such as Microsoft Azure and Google Cloud offer AI services that can be run in isolated environments where access is governed by identity, in line with Zero Trust principles.

💡 Why This Matters to You (Even If You’re Not a Tech Expert)

If you use AI tools at work—or rely on services powered by AI (like smart assistants, recommendation engines, or automated customer support)—you’re affected.

Zero Trust helps ensure that:

- Your personal data isn’t misused by compromised AI

- AI decisions are fair, accurate, and not secretly manipulated

- Businesses don’t suffer breaches that lead to service outages or fraud

In short: Zero Trust makes AI safer, more reliable, and more trustworthy.

✅ The Bottom Line

AI is too important—and too powerful—to leave unprotected. Traditional security models assume safety inside the network. But in a world of remote work, cloud computing, and sophisticated AI attacks, that assumption is dangerous.

Zero Trust doesn’t just guard the door—it verifies every guest, watches every move, and locks away the valuables.

As AI becomes woven into the fabric of our digital lives, combining it with Zero Trust isn’t optional. It’s essential.

🔐 In the age of AI, trust nothing. Verify everything.
