In early 2025, a video of a major European CEO appeared online, announcing a “strategic partnership” with a foreign tech firm. Stock prices surged until the company confirmed the video was fake: the CEO had never made the statement. It was a deepfake, a piece of hyper-realistic synthetic media created with artificial intelligence.

Once confined to Hollywood and research labs, deepfakes are now accessible to anyone with a smartphone and an internet connection. In 2026, they pose serious threats to personal privacy, corporate security, democracy, and truth itself.

This guide explains what deepfakes are, how they’re made, what risks they pose, and, most importantly, how you can detect and defend against them.

What Is a Deepfake?

A deepfake is synthetic media (video, audio, or image) generated or manipulated using deep learning, a subset of AI. The term combines “deep learning” and “fake.”

Unlike basic photo filters or voice changers, deepfakes can:

- Swap one person’s face onto another’s body in real time

- Clone a person’s voice from a short audio sample, sometimes only seconds long

- Generate entirely new footage of someone saying or doing things they never did

🎭 Key insight: Deepfakes don’t just edit—they invent realistic content that never existed.

How Do Deepfakes Work? (The Technical Breakdown)

1. Core Technology: Generative Adversarial Networks (GANs)

Many deepfakes rely on GANs (others use autoencoders or diffusion models). A GAN pairs two neural networks that compete:

- Generator: Creates fake images/videos

- Discriminator: Tries to spot fakes

They train against each other—like a forger and an art expert—until the generator produces fakes the discriminator can’t distinguish from real data.
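The forger-versus-expert loop can be sketched in a few lines. The toy below is an illustration only, not how any deepfake tool is built: the "real data" are numbers drawn around 4.0, the generator is a single linear function, and the discriminator is a single logistic unit. All names, the learning rate, and the data distribution are assumptions chosen for readability. Real systems use deep convolutional networks trained on images, and a toy this small is not guaranteed to settle at a stable solution.

```python
import math
import random


def sigmoid(s: float) -> float:
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def discriminator_grads(x_real, x_fake, w, c):
    """Gradients of -[log D(x_real) + log(1 - D(x_fake))] w.r.t. w and c,
    where D(x) = sigmoid(w * x + c)."""
    d_real = sigmoid(w * x_real + c)
    d_fake = sigmoid(w * x_fake + c)
    grad_w = (d_real - 1.0) * x_real + d_fake * x_fake
    grad_c = (d_real - 1.0) + d_fake
    return grad_w, grad_c


def generator_grads(z, a, b, w, c):
    """Gradients of -log D(G(z)) w.r.t. a and b, where G(z) = a * z + b."""
    d_fake = sigmoid(w * (a * z + b) + c)
    grad_a = (d_fake - 1.0) * w * z
    grad_b = (d_fake - 1.0) * w
    return grad_a, grad_b


def train(steps=2000, lr=0.01, seed=0):
    """Alternate one discriminator update and one generator update per step."""
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.0, 0.0  # discriminator parameters
    for _ in range(steps):
        x_real = rng.gauss(4.0, 1.0)  # one "real" sample (assumed distribution)
        z = rng.gauss(0.0, 1.0)       # random noise fed to the generator
        x_fake = a * z + b            # the generator's forgery

        # The expert learns to score real samples high and forgeries low.
        gw, gc = discriminator_grads(x_real, x_fake, w, c)
        w -= lr * gw
        c -= lr * gc

        # The forger adjusts its output to raise the expert's score for it.
        ga, gb = generator_grads(z, a, b, w, c)
        a -= lr * ga
        b -= lr * gb
    return a, b, w, c
```

The key design point is the alternation: each network is updated against the current state of the other, so neither is ever trained in isolation.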

2. Data Collection

To create a convincing deepfake of a person, the AI needs:

- Hundreds of images or videos of their face from different angles

- Audio samples (for voice cloning)

Publicly available data (social media, interviews, YouTube) is often enough.

3. Training & Rendering

- The model learns facial landmarks, expressions, speech patterns, and lighting.

- Once trained, it can animate the target face to match new audio or script.

- Modern tools (like HeyGen, D-ID, or open-source Wav2Lip) can generate deepfakes in minutes.

4. Voice Cloning

Tools like ElevenLabs or Resemble AI use text-to-speech (TTS) models fine-tuned on a person’s voice. With just 30 seconds of clean audio, they can replicate tone, accent, and emotion.

💡 Example: In a case reported in early 2024, scammers used AI-cloned voice and video of a company’s CFO on a conference call to trick a finance employee in Hong Kong into transferring about $25 million.

Major Deepfake Security Risks (With Real Examples)

1. Financial Fraud & CEO Impersonation

- How it works: Scammers call employees using a cloned executive voice: “This is Sarah from Finance. I need you to approve an urgent wire transfer.”

- Real case: In 2019, a UK energy firm lost about $243,000 after a caller using AI-cloned audio impersonated the chief executive of its parent company.

2. Political Disinformation

- How it works: Fake videos of politicians making inflammatory statements go viral before fact-checkers respond.

- Real case: During the 2024 Indian elections, a deepfake video of a candidate “confessing” to corruption spread to 10M+ people in 48 hours—swaying voter sentiment.

3. Reputation Damage & Blackmail

- How it works: Malicious actors create fake explicit videos of individuals (often women) and threaten to release them unless paid.

- Real case: In 2025, over 12,000 deepfake pornographic videos were reported on Telegram—95% featured non-consensual likenesses of real people.

4. Corporate Espionage & Sabotage

- How it works: Fake internal memos or executive announcements cause stock drops or employee panic.

- Real case: A fake video of a pharmaceutical CEO announcing a drug recall caused a 17% stock plunge before being debunked.

5. Social Engineering at Scale

- How it works: AI-generated customer service agents call thousands of people, using cloned voices of trusted brands to steal credentials.

- Real case: In 2026, a U.S. bank warned customers about deepfake calls mimicking its fraud department to extract OTPs (one-time passwords).

How to Detect Deepfakes: Red Flags to Watch For

Although generation quality keeps improving, many deepfakes still show subtle flaws:

🔍 Visual Cues (Video)

- Inconsistent lighting on the face vs. background

- Unnatural blinking (too little or too much)

- Blurry edges around the face, hair, or neck

- Mismatched lip movements (especially in non-native languages)

- Stiff or robotic head movements
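The blinking cue can be turned into a rough automated check. The sketch below assumes a face-tracking step has already produced one eye-openness value per frame (often called the eye aspect ratio, or EAR). The 0.2 "closed" threshold and the 5 to 40 blinks-per-minute range are illustrative assumptions, not validated cut-offs, and all function names are hypothetical.

```python
from typing import Sequence


def count_blinks(ear_series: Sequence[float], closed_threshold: float = 0.2) -> int:
    """Count open-to-closed transitions in a per-frame eye-openness series."""
    blinks = 0
    eye_closed = False
    for ear in ear_series:
        if ear < closed_threshold and not eye_closed:
            blinks += 1          # eye just closed: start of a new blink
            eye_closed = True
        elif ear >= closed_threshold:
            eye_closed = False   # eye is open again
    return blinks


def blinks_per_minute(ear_series: Sequence[float], fps: float,
                      closed_threshold: float = 0.2) -> float:
    """Blink rate for a clip recorded at `fps` frames per second."""
    if fps <= 0 or len(ear_series) == 0:
        raise ValueError("need a positive fps and at least one frame")
    blinks = count_blinks(ear_series, closed_threshold)
    return blinks * 60.0 * fps / len(ear_series)


def blink_rate_is_unusual(rate: float, low: float = 5.0, high: float = 40.0) -> bool:
    """Flag rates outside an assumed plausible range (illustrative bounds)."""
    return rate < low or rate > high
```

A flagged clip is only a hint. People blink less when reading or concentrating, and newer generators reproduce blinking well, so treat the result as one weak signal.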

🔊 Audio Cues (Voice)

- Flat emotional tone (even when expressing excitement or anger)

- Slight delays between words

- Unnatural pronunciation of uncommon words

- Background noise inconsistencies

🌐 Contextual Clues

- The video appears only on obscure websites or social media—not official channels

- No corroborating reports from trusted news sources

- The claim is designed to provoke strong emotion (fear, outrage)

⚠️ Warning: Detection is getting harder. By 2026, many deepfakes pass casual inspection.

How to Verify Information in the Age of Deepfakes

✅ 1. Use Reverse Image/Video Search

- Google Lens, InVID, or TinEye can find earlier versions or source material.

- If the “breaking news” video has no prior appearances, be suspicious.
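One building block behind near-duplicate image lookup is perceptual hashing, which gives similar-looking images similar fingerprints. The sketch below is a simplified "average hash" and is not a description of how Google Lens, InVID, or TinEye work internally. It assumes the image has already been shrunk to a small grayscale grid; the function names are illustrative.

```python
from typing import List

Grid = List[List[int]]  # small grayscale image, pixel values 0-255


def average_hash(pixels: Grid) -> int:
    """Set one bit per pixel: 1 if the pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(h1: int, h2: int) -> int:
    """Number of differing bits; a small distance suggests a near-duplicate."""
    return bin(h1 ^ h2).count("1")


original = [[10, 200], [220, 30]]
brightened = [[20, 210], [230, 40]]   # same picture, slightly brighter
unrelated = [[200, 10], [30, 220]]    # inverted pattern

print(hamming_distance(average_hash(original), average_hash(brightened)))
print(hamming_distance(average_hash(original), average_hash(unrelated)))
```

Because the hash depends on relative brightness rather than exact pixel values, a re-encoded or lightly edited copy of an older clip can still match its source frame.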

✅ 2. Check Official Channels

- Did the person or organization confirm the statement on their verified website or social media?

- Legitimate announcements are rarely only on TikTok or anonymous Telegram channels.

✅ 3. Look for Digital Watermarks

- Platforms such as YouTube, Meta, and TikTok now support Content Credentials (provenance metadata defined by the C2PA standard) that can show whether media is AI-generated.

- Look for labels like “Edited,” “AI-Generated,” or “Altered.”
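For readers who want to see what such a label is derived from, here is a minimal sketch of checking provenance metadata for an AI-generation marker. It assumes the Content Credentials manifest has already been extracted and parsed into a dictionary by a C2PA-aware tool, and the dictionary shape shown is a simplified assumption rather than the full specification. The check looks for the IPTC digital source type `trainedAlgorithmicMedia`, which is used to mark media created by a generative model. It does not validate signatures, which a real verifier must do.

```python
from typing import Any, Dict, List

# Final path segment of the IPTC digital source type for generative-AI media.
AI_SOURCE_TYPE_SUFFIX = "/trainedAlgorithmicMedia"


def ai_generated_actions(manifest: Dict[str, Any]) -> List[str]:
    """Return the actions in a parsed manifest that are marked as AI-generated.

    The manifest layout (assertions -> data -> actions) is a simplified
    assumption for illustration.
    """
    hits: List[str] = []
    for assertion in manifest.get("assertions", []):
        if not str(assertion.get("label", "")).startswith("c2pa.actions"):
            continue
        for action in assertion.get("data", {}).get("actions", []):
            source_type = str(action.get("digitalSourceType", ""))
            if source_type.endswith(AI_SOURCE_TYPE_SUFFIX):
                hits.append(action.get("action", "unknown"))
    return hits
```

An empty result does not prove the media is authentic: metadata can be stripped when a file is re-uploaded or screen-recorded, so a missing label means "unknown", not "real".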

✅ 4. Use Detection Tools (Cautiously)

- Microsoft Video Authenticator (analyzes for deepfake artifacts)

- Reality Defender (enterprise tool for real-time detection)

- Amber Authenticate (for journalists)

⚠️ Note: No tool is 100% reliable—use as one signal among many.
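"One signal among many" can be made concrete by combining a detector's output with the contextual checks described earlier. The sketch below is a hypothetical scoring scheme: the field names and weights are arbitrary assumptions chosen for illustration, not calibrated values from any tool.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    detector_score: float        # 0.0 (looks real) to 1.0 (looks fake), from any tool
    on_official_channel: bool    # posted by the person's or company's verified account
    corroborated_by_news: bool   # reported by at least one trusted outlet
    has_earlier_source: bool     # reverse search found an earlier version of the clip


def suspicion_score(e: Evidence) -> float:
    """Weighted blend of signals; higher means more reason to distrust the clip."""
    detector = min(1.0, max(0.0, e.detector_score))  # clamp to [0, 1]
    score = 0.40 * detector
    if not e.on_official_channel:
        score += 0.25
    if not e.corroborated_by_news:
        score += 0.25
    if not e.has_earlier_source:
        score += 0.10
    return round(score, 2)
```

With these weights the detector alone can never push the score above 0.4, which reflects the advice above: a tool's verdict should shift your judgment, not settle it.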

✅ 5. Practice Media Literacy

- Ask: Who benefits from this going viral?

- Slow down before sharing emotionally charged content.

- Cross-reference with multiple trusted sources.

Protecting Yourself and Your Organization

For Individuals:

- Limit public photos/videos (reduce training data for impersonators)

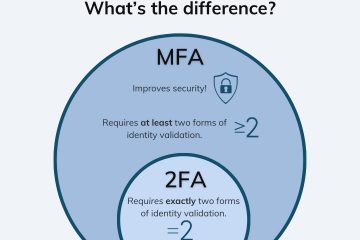

- Enable multi-factor authentication (MFA) on all accounts

- Never share sensitive info based on a voice/video call alone—verify via a second channel

For Businesses:

- Train employees on deepfake phishing (include voice/video in security drills)

- Establish verbal code words for financial approvals

- Use digital identity verification for high-stakes transactions
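The code-word and second-channel rules can be written down as an explicit approval policy so that a convincing voice alone never authorizes a payment. This is a minimal sketch under stated assumptions: the `TransferRequest` fields, the `may_approve` function, and the 10,000 threshold are hypothetical, and a real workflow would live inside the organization's payment system.

```python
import hmac
from dataclasses import dataclass


@dataclass
class TransferRequest:
    amount: float
    spoken_code_word: str          # what the caller said when challenged
    confirmed_via_callback: bool   # requester was called back on a number already on file


def may_approve(request: TransferRequest, expected_code_word: str,
                callback_threshold: float = 10_000.0) -> bool:
    """Approve only if the code word matches and, for large amounts,
    the request was also confirmed through a second channel."""
    code_ok = hmac.compare_digest(
        request.spoken_code_word.strip().lower().encode("utf-8"),
        expected_code_word.strip().lower().encode("utf-8"),
    )
    if not code_ok:
        return False
    if request.amount >= callback_threshold and not request.confirmed_via_callback:
        return False
    return True
```

The callback matters more than the code word: a code word can leak, but calling back on a number already on file forces the attacker to control a channel they did not choose.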

For Everyone:

- Support legislation requiring AI disclosure (e.g., the EU AI Act or the proposed U.S. DEEPFAKES Accountability Act)

- Demand transparency from social media platforms

The Future: Can We Trust Anything We See?

Deepfakes are not going away. As AI advances, the line between real and synthetic will blur further. But this doesn’t mean we surrender to deception.

Instead, we must shift from trusting media to verifying provenance. The goal isn’t to spot every fake—it’s to build systems where authenticity is provable.

🔐 Final thought: In 2026, critical thinking is your best defense.

Question. Verify. Delay sharing.

Because in the age of AI, seeing is no longer believing—but verifying is empowering.
